Data Engineering

Resilient ETL design, orchestration, and performance optimization across legacy and modern stacks.

- Batch and near-real-time pipelines

- Airflow orchestration and migration

- PySpark and distributed data processing

Open to Data & AI Engineering Opportunities

I design and optimize data systems that scale. Today I build production ETL pipelines at NTT DATA, and I am expanding into agentic engineering with a focus on reliable tool-calling workflows.

3+ years of professional experience in Data Engineering (since June 2022)

Current Focus

My work combines data pipeline reliability with agentic workflow experimentation and production delivery practices.


Production-ready software delivery using agentic engineering tools (Claude Code, OpenCode, Codex) with structured workflows, reusable skills, and quality hooks.

Cloud-native delivery practices that keep teams shipping faster without sacrificing observability.

Recent Development

This is the latest product I launched, focused on clarity, trust, and practical user value.

Featured Case Study

Production financial assistant experience built with agentic engineering workflows

A live financial calculator platform delivered in one month using an agentic engineering workflow. Development combined human direction with Claude Code, OpenCode, and Codex to accelerate delivery while maintaining production standards.

Challenge

Build a trustworthy financial UX that explains calculations clearly, supports fast iteration, and remains maintainable as new calculators and assistant flows are added.

Solution

Defined scoped implementation loops, used tool-assisted coding sessions for feature delivery, and enforced quality hooks for validation before release. The workflow prioritized explicit tasks, review checkpoints, and reproducible changes.

Roles where I improved data reliability, orchestration quality, and delivery speed.

NTT DATA Europe & LATAM

Designing and maintaining data pipelines across public-sector projects with strong reliability and delivery expectations.

cdmon

Delivered internal analytics and automation capabilities for business and engineering teams.

Additional technical work across web engineering and applied optimization.

Portfolio website built with Astro and Tailwind CSS as a lightweight, performant front-end foundation.

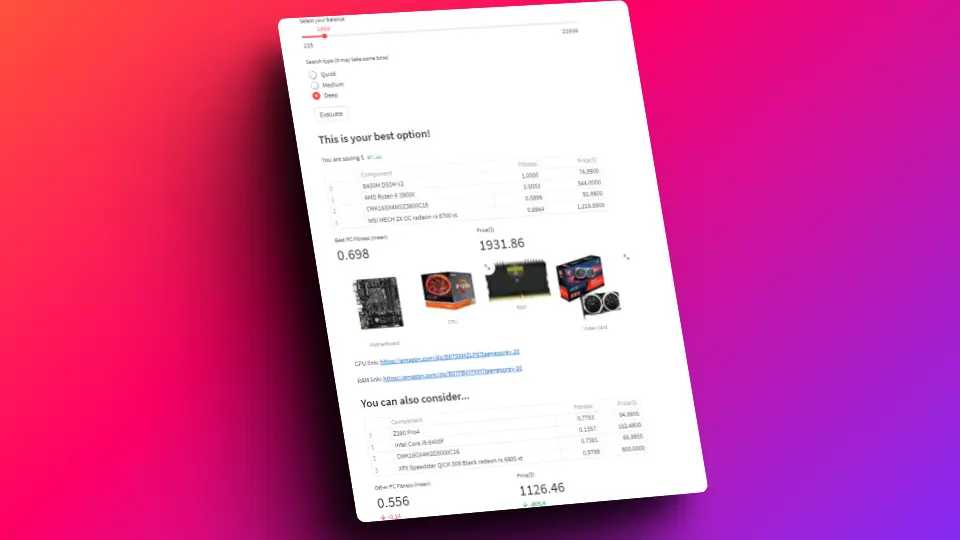

Data science project that combines scraped pricing data, preprocessing, and a genetic algorithm to optimize computer component selection under constraints.
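As a rough illustration of the idea (not the project's actual code), a genetic algorithm for this kind of problem can be sketched as below. The catalog, prices, scores, and the single budget constraint are all hypothetical placeholders; the real project works from scraped pricing data and may use different constraints and operators.

```python
import random

# Hypothetical catalog: (name, price, benchmark score) per category,
# sorted by ascending price. Real data would come from the scraping step.
CATALOG = {
    "cpu": [("cpu-a", 120, 50), ("cpu-b", 220, 80), ("cpu-c", 400, 100)],
    "gpu": [("gpu-a", 200, 60), ("gpu-b", 450, 110), ("gpu-c", 900, 160)],
    "ram": [("ram-a", 40, 10), ("ram-b", 80, 18), ("ram-c", 160, 25)],
}
CATEGORIES = list(CATALOG)
BUDGET = 800  # assumed constraint: total price must not exceed this


def total_price(build):
    """A build is a list with one catalog index per category."""
    return sum(CATALOG[c][i][1] for c, i in zip(CATEGORIES, build))


def fitness(build):
    """Total score when within budget; negative overspend otherwise."""
    price = total_price(build)
    if price > BUDGET:
        return BUDGET - price
    return sum(CATALOG[c][i][2] for c, i in zip(CATEGORIES, build))


def random_build(rng):
    return [rng.randrange(len(CATALOG[c])) for c in CATEGORIES]


def crossover(a, b, rng):
    # Uniform crossover: each gene is taken from one parent at random.
    return [rng.choice(pair) for pair in zip(a, b)]


def mutate(build, rng, rate=0.2):
    # Each gene is replaced by a random option with probability `rate`.
    return [
        rng.randrange(len(CATALOG[c])) if rng.random() < rate else i
        for c, i in zip(CATEGORIES, build)
    ]


def tournament(population, rng, k=3):
    # Pick the fittest of k randomly sampled individuals.
    return max(rng.sample(population, k), key=fitness)


def optimize(generations=40, size=20, seed=0):
    rng = random.Random(seed)
    # Seed with the cheapest build so a feasible candidate always exists.
    cheapest = [0] * len(CATEGORIES)
    population = [cheapest] + [random_build(rng) for _ in range(size - 1)]
    for _ in range(generations):
        elite = max(population, key=fitness)  # elitism: keep the best so far
        children = [
            mutate(
                crossover(tournament(population, rng),
                          tournament(population, rng), rng),
                rng,
            )
            for _ in range(size - 1)
        ]
        population = [elite] + children
    return max(population, key=fitness)


best = optimize()
print([CATALOG[c][i][0] for c, i in zip(CATEGORIES, best)], total_price(best))
```

Encoding the budget as a fitness penalty keeps the crossover and mutation operators simple, and carrying the best build forward each generation means the result is never worse than the cheapest feasible starting point.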

I am an adaptable and pragmatic engineer who enjoys combining analytical rigor with creative problem-solving.

My current focus is building robust data systems and extending that mindset into agentic engineering patterns that are production-ready.

Outside work, I keep learning through programming projects, chess, and challenges that push me beyond my comfort zone.

Let's collaborate

If you are hiring for Data Engineering or Agentic Engineering roles, I can help accelerate delivery while keeping architecture and operations robust.